Transition statements are a common recommendation in survey design, often used to signal when a questionnaire is shifting from one topic to another. The logic is straightforward: smoother transitions should make surveys feel more coherent, reduce confusion, and perhaps even improve data quality. But while that advice makes intuitive sense, especially in interviewer-led surveys, there is limited evidence that it matters in self-administered online surveys.

This experiment, part of our ongoing Methodology matters series, evaluates whether transition statements actually improve the online survey experience. Using an embedded experiment in a YouGov survey, we test whether transitions affect respondent enjoyment, flow, confusion, data quality, and survey duration. The results suggest that transitions are largely harmless, but also mostly unnecessary, in this type of online survey setting.

Summary and takeaway

Many survey vendors and researchers recommend including transition statements between topics to improve respondent experience and survey data quality. In an experiment embedded in a YouGov survey, we do not find evidence that transitions in multi-topic surveys improve respondent experience or enhance data quality, but nor do they increase survey duration. In short, transitions seem harmless but superfluous in the online survey context: respondents appear comfortable moving across disparate topics without extra signposting.


Introduction and background

One of the major guidelines in online survey research is to ensure that a survey transitions smoothly between topics. These transitions are typically short statements that signal an upcoming shift. Ipsos recommends that “[w]hen changing topics, use very brief transition sentences or phrases.” Qualtrics advises that “rather than ask respondents a string of random, disconnected questions, surveys should follow a logical flow, with smooth transitions between questions.” And according to Cint (formerly Lucid), “[m]ixing statements and questions. . . keeps respondents engaged.”

These recommendations trace back to best practices developed for interviewer-administered surveys, both in-person and over the phone. In those settings, it would violate conversational norms for an interviewer to abruptly jump from one topic to another without warning. In that context, transitions make sense: they maintain rapport, orient the respondent, and keep the interview feeling like a coherent conversation.

With the shift to self-administered online surveys, these recommendations have largely been carried over. However, there has been relatively little research into whether transition statements actually produce the intended benefits in online settings. In theory, online respondents who lack an interviewer to guide them might especially benefit from transitions that signal shifts in topic. Transitions might also make online surveys feel more like guided interactions, potentially improving respondent satisfaction and response quality.

But it’s also possible that transitions are unnecessary or even counterproductive in online surveys. Transitions might interrupt flow or add unnecessary friction rather than provide much-needed guidance, especially for experienced survey respondents. Indeed, the one study to experimentally test transitions in online surveys found that they backfired, increasing break-offs and survey duration (Fu, Hughes, and Park 2022).

Overall, the theoretical expectations for transitions in online surveys are mixed, and the empirical basis for recommending them remains thin. We contribute to this limited evidence base by experimentally evaluating whether transition statements have their intended effects in online survey contexts.

Hypotheses

Building on prevailing narratives about best practice, we preregistered hypotheses that transition statements would have positive effects in online surveys. Specifically, we expected transitions to improve respondent experience in the form of higher enjoyment, better perceived flow, and reduced confusion. We also anticipated improvements in response quality by way of greater attention and effort. However, because transitions add text to read, we also hypothesized that their inclusion would increase survey duration.

Hypothesis 1 (Respondent Experience): Transitions will improve respondents’ survey experiences.

- H1a: Transitions will increase respondents’ evaluations of survey enjoyment.

- H1b: Transitions will increase respondents’ evaluations of survey flow.

- H1c: Transitions will decrease respondents’ self-reported confusion.

Hypothesis 2 (Survey Duration): Transitions will increase overall survey duration.

Hypothesis 3 (Non-Response): Transitions will decrease item- and unit-level non-response.

- H3a: Transitions will decrease item non-response.

- H3b: Transitions will decrease break-offs (partial unit non-response).

Hypothesis 4 (Future Participation): Transitions will increase willingness to participate in future surveys (preregistered but not tested due to limitations in the available data).

Hypothesis 5 (Data Quality): Transitions will increase response quality.

- H5a: Transitions will increase respondent attentiveness.

- H5b: Transitions will decrease straightlining on grid-format questions.

Research design

We randomly assigned each respondent to one of three conditions:

- No Transition: no transitional text.

- Same Page Transition: transitional text shown above the first question in each module.

- Separate Page Transition: transitional text shown on its own page immediately before each module.

The treatment conditions reflect two common placement strategies for transitions. Some guidance recommends placing transitions on the same page as the questions they introduce (e.g., Ipsos: “transitions [should be] on the same screens as the next topic”), while other guidance allows for transitions to appear on a separate screen (e.g., GESIS: introductory text “should be placed either at the top of a survey page with the next question or on a separate survey page”). Because professional recommendations are inconsistent, we implement both versions and remain agnostic as to which, if either, should perform better.

The survey was fielded by YouGov’s Scientific Research Group in July 2025 (n = 2,508). The survey contained three multi-question modules, covering three distinct topics: food preferences, family relations, and socio-demographic information. Each respondent received the same assigned condition across all three modules. For example, the transition statement for the food preferences module read:

“In this section of the survey, we are going to ask you about food.”

- In the Same Page Transition condition, this sentence appeared above the first food question.

- In the Separate Page Transition condition, it appeared on its own page before the first food question.

- In the No Transition condition, respondents proceeded directly to the first question: “How do you feel about the following types of food?”
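To make the design concrete, the following is a minimal sketch of how a three-arm assignment and the resulting page layout could be implemented. It is not the survey platform's actual code: the function names, the seed, and the use of equal assignment probabilities are all assumptions for illustration. Only the condition labels and the example wording come from the description above.

```python
import random
from collections import Counter

# Condition labels follow the article; everything else here is illustrative.
CONDITIONS = ("No Transition", "Same Page Transition", "Separate Page Transition")


def assign_conditions(respondent_ids, seed=2025):
    """Draw one condition per respondent with equal probability (an assumption)."""
    rng = random.Random(seed)
    return {rid: rng.choice(CONDITIONS) for rid in respondent_ids}


def pages_for(condition, transition_text, first_question):
    """Return the pages a respondent sees at the start of a module."""
    if condition == "Same Page Transition":
        # Transition text sits above the first question on one page.
        return [transition_text + "\n\n" + first_question]
    if condition == "Separate Page Transition":
        # Transition text gets its own page before the first question.
        return [transition_text, first_question]
    return [first_question]


assignment = assign_conditions(range(2508))
print(Counter(assignment.values()))

food_pages = pages_for(
    "Separate Page Transition",
    "In this section of the survey, we are going to ask you about food.",
    "How do you feel about the following types of food?",
)
print(len(food_pages))  # 2
```

Because the condition is drawn once per respondent and reused for every module, each respondent sees the same treatment throughout the survey.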

The study was preregistered on the Open Science Framework prior to data access.

Analysis plan

To estimate treatment effects, we fit a series of regression models with ordinary least squares (OLS), two for each outcome. The first model tests the effects of each treatment condition separately. For example, to test H1a, we regress Enjoyment on indicators for the two transition conditions (Same Page, Separate Page). The second model uses a single treatment indicator (Any Transition) that pools the two treatment arms. The second model was not preregistered but provides useful omnibus estimates of the effect of transitions. Every model also includes a set of pre-treatment covariates meant to absorb residual variance and increase precision when estimating treatment effects.
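The two model specifications can be illustrated with a small, self-contained sketch. This is not the analysis code used in the study: the data are made up and noise-free, a single covariate (age) stands in for the full covariate set, and the `solve` and `ols` helpers are hypothetical names for a plain normal-equations solver. The point is only to show how the design matrix differs between the separate-arm model and the pooled Any Transition model.

```python
def solve(a, b):
    """Solve the square system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def ols(X, y):
    """OLS coefficients from the normal equations (X'X) b = X'y."""
    k = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(k)]
    return solve(xtx, xty)


# Toy data, invented for illustration: condition, age, and an enjoyment score.
conditions = ["none", "same", "separate"] * 4
ages = [22, 35, 41, 58, 63, 29, 47, 33, 52, 38, 26, 70]
effects = {"none": 0.0, "same": 0.5, "separate": -0.25}
# Noise-free outcome, so the fit should recover the inputs up to rounding error.
enjoyment = [3.0 + effects[c] + 0.01 * a for c, a in zip(conditions, ages)]

# Model 1: one indicator per transition arm (baseline: no transition) plus the covariate.
X_separate = [[1.0, float(c == "same"), float(c == "separate"), float(a)]
              for c, a in zip(conditions, ages)]
# Model 2: a single pooled "any transition" indicator plus the covariate.
X_pooled = [[1.0, float(c != "none"), float(a)] for c, a in zip(conditions, ages)]

coef_separate = ols(X_separate, enjoyment)  # intercept, same page, separate page, age
coef_pooled = ols(X_pooled, enjoyment)      # intercept, any transition, age
print([round(b, 3) for b in coef_separate])
print([round(b, 3) for b in coef_pooled])
```

The sketch returns point estimates only. A real analysis would also need standard errors, for which a statistics library such as statsmodels is the more practical choice.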

We use two-tailed hypothesis tests with a significance threshold of p<0.05. Although our hypotheses are directional, we do not use one-tailed tests because our priors are not strong enough to rule out the possibility of effects in the opposite direction.
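The practical difference between the two testing approaches can be seen in a short sketch. It uses a normal approximation to the test statistic, which is an assumption made here for simplicity (reasonable at sample sizes in the thousands), and the estimate and standard error are hypothetical numbers rather than results from the study.

```python
import math


def two_tailed_p(estimate, std_error):
    """Two-tailed p-value for H0: effect = 0, using a normal approximation."""
    z = estimate / std_error
    return math.erfc(abs(z) / math.sqrt(2))


def one_tailed_p(estimate, std_error, expected_sign=1):
    """One-tailed p-value when the effect is hypothesized to have `expected_sign`."""
    z = expected_sign * estimate / std_error
    return 0.5 * math.erfc(z / math.sqrt(2))


# Hypothetical estimate that runs opposite to a positive directional hypothesis.
est, se = -0.21, 0.10

print(two_tailed_p(est, se) < 0.05)                   # True: detected as significant
print(one_tailed_p(est, se, expected_sign=1) < 0.05)  # False: the backfire goes unnoticed
```

A one-tailed test in the hypothesized direction would miss an effect of this size in the opposite direction, which is the scenario the two-tailed choice guards against.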

Results

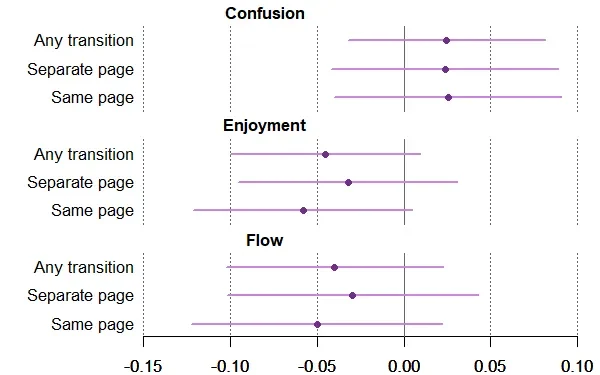

We estimate the average treatment effects of each of the two transition conditions relative to the no transitions baseline, as well as combined estimates that pool the two treatment arms. Figure 1 presents the estimated effects (with 95% confidence intervals) for the three measures of respondent experience. All three outcomes are coded so that higher values indicate a better experience: more enjoyment, better flow, and less confusion. Each of these outcome questions was asked at the end of the survey, after respondents had either encountered all three transitions or none.

We find no statistically significant effects of transitions on respondent experience. Across enjoyment, flow, and confusion, all estimates are small in magnitude. Further, the estimated effects point in different directions across metrics: they are favorable for confusion (i.e., confusion decreases) but unfavorable for enjoyment and flow. These results suggest that transitions do not meaningfully affect respondent experience in the YouGov survey.

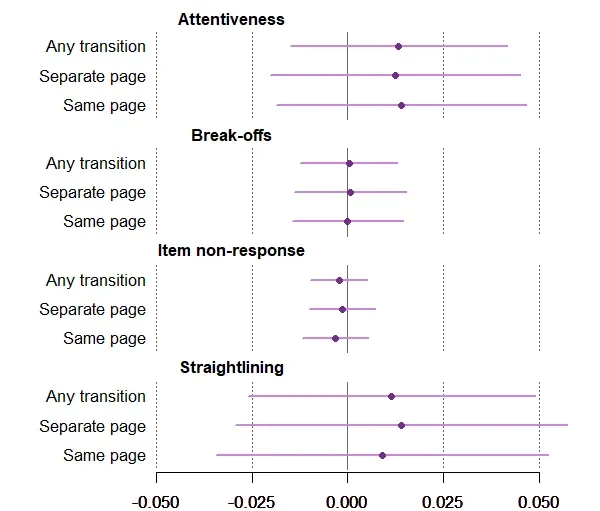

Figure 2 shows results for the four survey data quality metrics. Attentiveness is measured using a post-treatment attention check that requires selecting two contradictory responses. Break-offs are respondents who leave the survey after it has started. Item non-response is the total number of survey items skipped. Straightlining is measured as the (inverse) response entropy across the post-treatment grid-format questions about 20 different food items; higher entropy reflects more varied responses and thus less straightlining.
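To illustrate the entropy-based straightlining measure, here is a minimal sketch that computes Shannon entropy over one respondent's answers to a grid. The exact formula used in the study is not given above, so the choice of Shannon entropy in bits, the function name, and the 4-point scale are assumptions.

```python
import math
from collections import Counter


def response_entropy(responses):
    """Shannon entropy (in bits) of one respondent's answers across a grid's items."""
    counts = Counter(responses)
    n = len(responses)
    return sum(-(c / n) * math.log2(c / n) for c in counts.values())


# Hypothetical answers to a 20-item grid on a 4-point scale.
straightliner = [3] * 20    # same answer for every item
varied = [1, 2, 3, 4] * 5   # each scale point used equally often

print(response_entropy(straightliner))  # 0.0
print(response_entropy(varied))         # 2.0
```

A respondent who gives the same answer to every item has zero entropy, while one who spreads answers evenly across the scale reaches the maximum for that scale, so lower entropy signals more straightlining.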

We find that the inclusion of transitions has no significant effect on any data quality metrics. YouGov respondents are similarly attentive, effortful, and unlikely to exit the survey or skip questions regardless of whether transitions are used between topics.

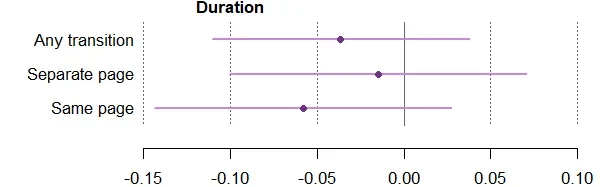

Finally, Figure 3 shows the effects of including transitions on average survey duration. The median survey completion time was just over 6 minutes. Given the right-skewed distribution of duration, we use the natural log of survey duration (in seconds) as our outcome measure. Contrary to our expectations, we do not find that transitions increase survey duration, even in the separate page condition where respondents have to click through three additional survey screens.

Optimistically, this null could mean that transitions help respondents cognitively reorient between topics, offsetting the added word count by smoothing topic shifts. Alternatively, if respondents skim transitions, the added text may not generate measurable time costs. Our estimates are consistent with both possibilities.
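For readers less familiar with logged duration outcomes, the sketch below shows why the transformation helps with right-skewed timings and how a coefficient on a logged outcome can be read as a proportional change. The completion times and the coefficient value are hypothetical, and `pct_change` is an illustrative helper name.

```python
import math
import statistics

# Hypothetical completion times in seconds; one slow respondent creates a long right tail.
durations = [290, 310, 340, 365, 380, 400, 450, 520, 3600]

# The tail pulls the mean well above the median.
print(statistics.mean(durations) > statistics.median(durations))  # True

# Logging compresses the tail so that one extreme value has less leverage.
log_durations = [math.log(d) for d in durations]


def pct_change(log_coef):
    """Convert a coefficient on a logged outcome into a proportional change."""
    return math.exp(log_coef) - 1.0


# A hypothetical coefficient of 0.02 on log duration is roughly a 2% longer survey.
print(round(pct_change(0.02) * 100, 2))  # 2.02
```

On a survey with a median of about six minutes, a 2% difference would amount to only a few seconds.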

Conclusion

Our results suggest that, for typical online panel surveys, transitions are optional rather than essential. Respondents seem comfortable answering a series of varied questions without much hand-holding, and adding brief topic introductions does not move the needle on experience, data quality, or survey length in this setting.

That said, our study focuses on a short survey (about 6 minutes) and draws from YouGov’s panel of comparatively experienced respondents. Transitions may still matter more in other contexts, such as very long surveys, surveys with unfamiliar topics, or samples with many first-time respondents. Future research could examine those contexts to explore the conditions under which transitions might help or hurt. For most standard online panel work, though, researchers likely do not need to worry much about the consequences of including or omitting transitions. The choice of whether and how to include them is up to the researcher’s own preference.

About the authors

Jae-Hee Jung, Assistant Professor, Department of Political Science, Rice University

Jae-Hee Jung is an assistant professor in the Department of Political Science at Rice University. She received her Ph.D. from Washington University in St. Louis. Her research is in comparative party politics, political behavior, and moral and political psychology.

Trent Ollerenshaw, Assistant Professor, Department of Political Science, University of Houston

Trent Ollerenshaw is an assistant professor in the Department of Political Science at the University of Houston. He received his Ph.D. from Duke University. His research focuses on American public opinion, political psychology, and survey methodology.

About Methodology matters

Methodology matters is a series of research experiments focused on survey experience and survey measurement. The series aims to contribute to the academic and professional understanding of the online survey experience and promote best practices among researchers. Academic researchers are invited to submit their own research design and hypotheses.