YouGov BrandIndex Voices represents a new form of data: AI-led interviews that help you move from what people said about a brand metric to why they said it – fast, across hundreds of people, with depth that would previously take weeks (sometimes months) to compile and interpret properly.

While speed is important, it isn’t the primary value. What matters more is what our approach enables: a method for building qualitative understanding at scale, without abandoning the research disciplines that make insights trustworthy in the first place.

And none of it works without panel quality. As more and more providers claim their data is “real” or “human,” the reality is simple: if you don’t own the panel, you don’t control recruitment, engagement, or quality, and the data will always be questionable. YouGov was founded on a belief that owning the panel is essential, and Voices is built on top of that foundation.

This article walks through the journey end-to-end: how BrandIndex Voices is commissioned, how the AI Interviewer runs the interviews, how the analysis works, and how we quality-check what we publish. This is an important part of our commitment to transparency, and we’re excited to share it with you.

Step 1: Commissioning the right interview

BrandIndex Voices is designed to help explain movement in brand metrics by exploring the “why” behind BrandIndex responses.

Clients don’t write a bespoke interview script. Instead, Voices uses templated opening questions that are designed to support research quality, neutrality, and consistency. You control what you want to explore (for example: the metric and the audience you want to understand). YouGov controls the wording and structure of the opening question to reduce the risk of bias, leading language, or inconsistent outputs.

This matters because, in AI interviewing, the first question doesn’t just begin the conversation; it sets the tone, the boundaries, and the validity of everything that follows.

Step 2: The AI Interviewer runs a short, semi-structured interview

This is where the AI Interviewer (also referred to as the YouGov Interviewer) comes in.

Voices uses the AI Interviewer to run short, semi-structured follow-up interviews with YouGov members based on their BrandIndex survey answers. The goal is straightforward: explore why people hold a particular view, not just what they think.

Typical length - and why it varies

An AI Interviewer session has a maximum of 12 questions. Conversations can be shorter when:

- the discussion reaches a natural conclusion

- participant engagement is low

- extremely sensitive, unsafe, or inappropriate content is shared.

Participants can also end the conversation earlier themselves.
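
The stopping rules above can be sketched as a simple check. This is an illustrative sketch only, not YouGov’s implementation: `SessionState` and `end_reason` are hypothetical names, the order in which the rules are checked is an assumption, and because this article does not describe how low engagement or unsafe content is detected, those signals appear here as pre-computed flags.

```python
from dataclasses import dataclass
from typing import Optional

MAX_QUESTIONS = 12  # the per-session maximum stated above


@dataclass
class SessionState:
    """Hypothetical snapshot of an interview in progress."""
    questions_asked: int = 0
    reached_natural_conclusion: bool = False
    low_engagement: bool = False
    unsafe_or_sensitive_content: bool = False
    participant_ended: bool = False


def end_reason(state: SessionState) -> Optional[str]:
    """Return why the session should end, or None if it can continue.

    The check order is an assumption made for this sketch.
    """
    if state.participant_ended:
        return "participant ended the conversation"
    if state.unsafe_or_sensitive_content:
        return "sensitive, unsafe, or inappropriate content"
    if state.low_engagement:
        return "low participant engagement"
    if state.reached_natural_conclusion:
        return "natural conclusion"
    if state.questions_asked >= MAX_QUESTIONS:
        return "maximum number of questions reached"
    return None
```

In this sketch, a session with three questions asked and no flags set returns `None` and continues, while any single flag ends it with a readable reason that could be logged alongside the conversation.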

Adaptive questioning, not a rigid script

The Interviewer doesn’t follow a fixed script. It uses an interview guide structure (similar in spirit to a qualitative interview) where early questions open people up, and later questions dig into the “why.”

Interview guides can include branching: the Interviewer can pursue different avenues depending on what the participant says, while still returning to the research objective to determine what to ask next.

This is a key difference versus a static open-ended question. A single open-end can only ask one targeted prompt. A conversational interview can start broad and then build depth across:

- what’s driving the view

- experiences shaping perception

- how people compare brands in context

- what matters most to them personally

Taken together, the output is richer than a single-response open end because it’s closer to an interview.

Step 3: The “core DNA” that protects neutrality and research integrity

BrandIndex Voices is built around a set of fixed prompt elements (often referred to internally as the Interviewer’s core DNA) that are designed to stay consistent across deployments. These fixed sections exist because the most important rules shouldn’t vary from project to project.

That core DNA covers:

- Role clarity: the AI’s role is a market research interviewer (not a conversational chatbot)

- Neutrality and impartiality: interview rules are designed to minimize bias and leading language

- Questions-only behavior: the Interviewer is instructed to only ask questions. It avoids conversational “validation” such as “that’s interesting” or “that must have been hard.” The result is an interview (question and answer), not a free-form conversation.

- Clear boundaries on behavior: system instructions define what the Interviewer can and cannot do (for example: no advice, no factual claims, no challenging or commenting on participant opinion)

- Plain language: tone and communication are designed to be clear, simple, and jargon-free

- Sensitive topic management and data minimization: the Interviewer can steer back to the research objective, and it has clear instructions for when and how to end a conversation

- Clear end rules: the conversation can end if it’s unsafe or inappropriate, if the participant instructs it to end, if the topic becomes very sensitive, or if the participant is in danger (including risk of self-harm)

One important caveat sits alongside all of this: the Interviewer doesn’t follow a script. Like human interviewers, it can make mistakes. The purpose of the core DNA is to keep the research disciplined even when the path through the interview is adaptive.

Step 4: From conversations to themes, summaries, and quotes

After interviewing comes the part most people understandably have questions about: analysis.

At a high level, YouGov processes conversational data using a design that mixes structured data processing with large language model (LLM) capabilities supported by prompt engineering, aggregation, and metric calculations. The exact implementation evolves as the solution is finalized for production, but the shape of the process is consistent.

A representative end-to-end flow looks like:

- Fetch conversations and metadata (the conversation plus relevant context about the question)

- Topic identification (extract the main topics across all interviews)

- Conversation analysis (analyze each interview: topic mentions, candidate quotes, and other per-conversation outputs)

- Aggregation (calculate quantitative rollups based on the extracted information)

- Summarization and quote selection (generate qualitative outputs based on the above)

- Format and return the results
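
To make the shape of that flow concrete, here is a minimal sketch in Python. It is not YouGov’s implementation: every name in it (`Conversation`, `identify_topics`, `analyze_conversation`, `aggregate`, `run_pipeline`) is hypothetical, the fetch step is replaced by passing conversations in directly, and the LLM-driven steps are replaced with plain keyword matching so the example runs on its own. Summarization is left out because it has no meaningful non-LLM stand-in.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Conversation:
    conversation_id: str
    messages: List[str]  # participant messages only, for brevity


@dataclass
class ConversationAnalysis:
    conversation_id: str
    topics: Set[str] = field(default_factory=set)
    candidate_quotes: Dict[str, List[str]] = field(default_factory=dict)


def identify_topics(conversations: List[Conversation], vocabulary: List[str]) -> List[str]:
    """Stand-in for LLM topic identification: keep vocabulary terms that occur anywhere."""
    text = " ".join(m.lower() for c in conversations for m in c.messages)
    return [term for term in vocabulary if term in text]


def analyze_conversation(conv: Conversation, topics: List[str]) -> ConversationAnalysis:
    """Stand-in for per-conversation analysis: topic mentions and candidate quotes."""
    result = ConversationAnalysis(conv.conversation_id)
    for message in conv.messages:
        for topic in topics:
            if topic in message.lower():
                result.topics.add(topic)
                result.candidate_quotes.setdefault(topic, []).append(message)
    return result


def aggregate(analyses: List[ConversationAnalysis]) -> Counter:
    """Count how many conversations mention each topic."""
    counts: Counter = Counter()
    for analysis in analyses:
        counts.update(analysis.topics)
    return counts


def run_pipeline(conversations: List[Conversation], vocabulary: List[str],
                 quotes_per_topic: int = 3) -> List[dict]:
    """Topic identification -> per-conversation analysis -> aggregation -> formatted output."""
    topics = identify_topics(conversations, vocabulary)
    analyses = [analyze_conversation(c, topics) for c in conversations]
    counts = aggregate(analyses)
    results = []
    for topic, n in counts.most_common():
        quotes = [q for a in analyses for q in a.candidate_quotes.get(topic, [])]
        results.append({"topic": topic, "conversations": n,
                        "quotes": quotes[:quotes_per_topic]})
    return results
```

The design point the sketch tries to preserve is the separation of stages: per-conversation outputs are produced first, and every rollup or quote is derived from them rather than from a single pass over all the text.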

Why conversation analysis is different from static open ends

Conversational data is not “a longer open end.” It behaves differently:

- multiple topics (and context shifts) can appear in one interview

- meaning is context-dependent (what a phrase refers to can change within a single exchange)

- emotion can be extracted more reliably from richer context

- interviews contain more depth and variability than single-question responses

This is why Voices is designed as a full analysis flow rather than a simple “summarize responses” layer.

Step 5: What you see in BrandIndex Voices

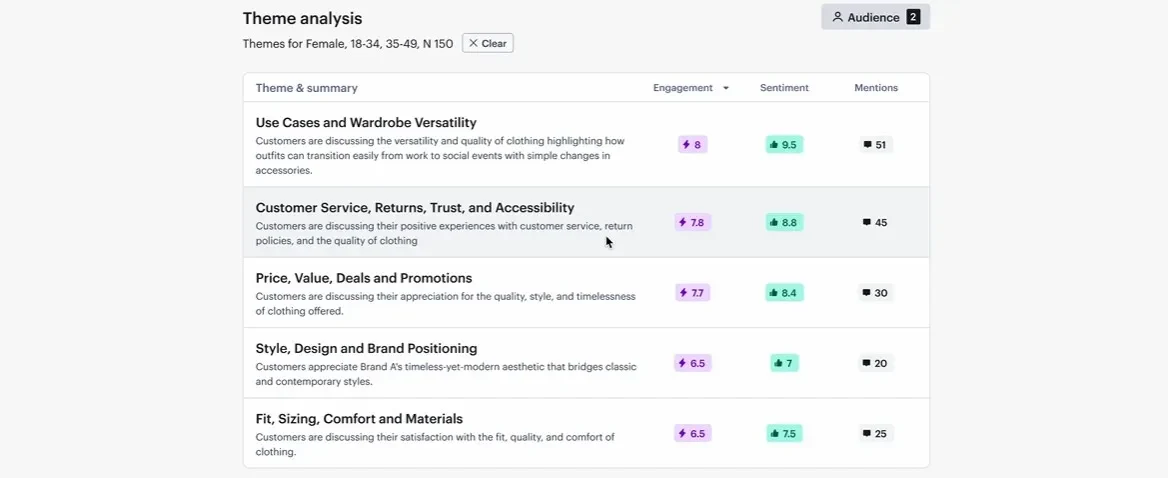

Within BrandIndex, Voices returns AI analysis of the conversations in a structured, audit-friendly way:

- Summary: overall engagement score, sentiment score, number of conversations, and a short summary of key insights

- Themes: themes that came up frequently, grouped by engagement score and number of conversations

- Selected customer quotes: deep-dive into themes with relevant quotes per theme

Importantly, YouGov does not publish full respondent-level transcripts in the product. Instead, you access selected quotes per theme—typically three to five—alongside executive and theme summaries.

Step 6: Quality assurance without the black box

Trust isn’t a message to us. It’s an operating principle.

Voices is designed so that outputs are not “AI opinions.” They are structured interpretations grounded in underlying interviews, with quality checks built around traceability and consistency.

At a high level, quality assurance includes:

- Traceability: themes, quotes, and summaries are designed to link back to the underlying conversations and messages they came from. This adds transparency and enables validation (AI or human).

- Automated quality checks: checks are benchmarked using human-labelled responses during development and used to monitor consistency over time.

- Model upgrade testing: when underlying models are upgraded, performance is tested to ensure results remain consistent.

- No hallucinated quotes: quotes are pulled directly from actual conversations, not generated.

This is how we aim to deliver what the market is asking for right now: speed and scale, without sacrificing the fundamentals of research integrity.
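
The traceability and verbatim-quote checks listed above can be illustrated with a short sketch. It is an assumption-laden example rather than a description of YouGov’s system: the `Transcripts` structure and the `trace_quote` and `untraceable_quotes` functions are hypothetical, and exact substring matching is only one simple way to confirm that a quote was pulled from a conversation rather than generated.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical store: conversation id -> list of (message id, message text)
Transcripts = Dict[str, List[Tuple[str, str]]]


def trace_quote(quote: str, transcripts: Transcripts) -> Optional[Tuple[str, str]]:
    """Return (conversation_id, message_id) of the first message that
    contains the quote verbatim, or None if no message contains it."""
    needle = quote.strip()
    if not needle:
        return None
    for conversation_id, messages in transcripts.items():
        for message_id, text in messages:
            if needle in text:
                return conversation_id, message_id
    return None


def untraceable_quotes(quotes: List[str], transcripts: Transcripts) -> List[str]:
    """Return the quotes that have no verbatim source in the transcripts."""
    return [q for q in quotes if trace_quote(q, transcripts) is None]
```

A quote that maps to a conversation and message ID can be validated by a person or another automated check; a quote that appears in `untraceable_quotes` has no verbatim source and would fail this sketch’s check.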

Step 7: The two scores—and what they mean

Voices reports two core output scores (current as of date of publication, and likely to evolve):

- Sentiment score (1–10): measures the overall emotional tone expressed toward a topic.

- Engagement score (1–10): measures how “interested” or “involved” participants are with a topic.

They work together: sentiment tells you whether the feeling is positive or negative; engagement tells you whether people care.

Why Voices matters

BrandIndex Voices is built to answer a simple demand: show your working.

AI can unlock a new category of insight that’s fast and at scale, but only if the method is disciplined, the outputs are structured, and the data foundation is real. Voices is YouGov’s approach to making that possible: AI interviewing and analysis that sits on top of a panel we own, control, and continuously work to keep at the quality levels required for accurate research.